Link: https://www.soa.org/digital-publishing-platform/emerging-topics/getting-started-with-julia/

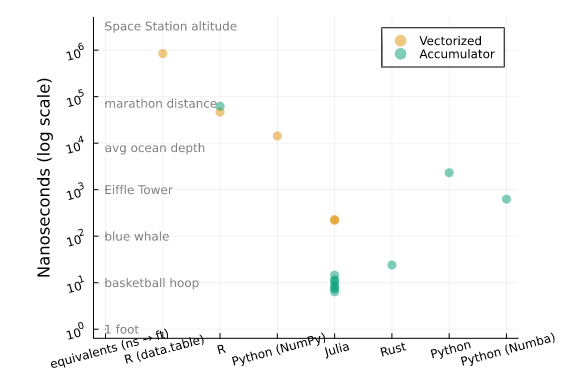

Graphic:

Excerpt:

Sensitivity testing is very common in actuarial workflows: essentially, it measures how much one variable changes in response to a change in another. In other words, the derivative!

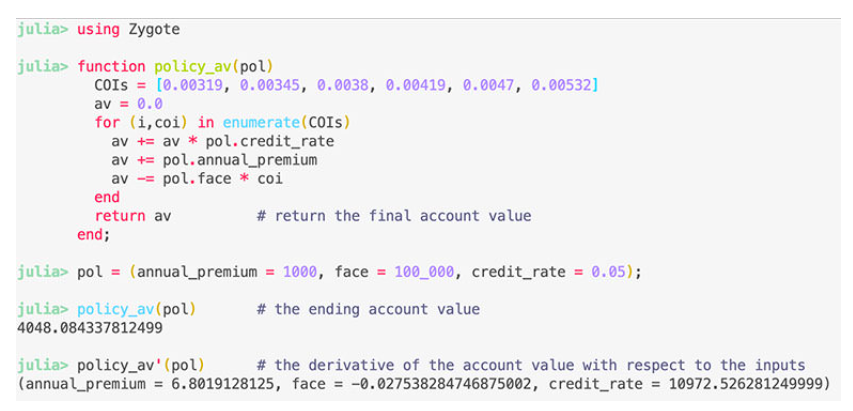

Julia has a unique capability: across almost the entire language and ecosystem, you can take the derivative of entire functions or scripts. For example, the following is real Julia code to automatically calculate the sensitivity of the ending account value with respect to the inputs:

When executing the code above, Julia isn’t just adding a small amount and calculating the finite difference. Differentiation is applied to entire programs through extensive use of basic derivatives and the chain rule. Automatic differentiation has uses in optimization, machine learning, sensitivity testing, and risk analysis. You can read more about Julia’s autodiff ecosystem here.
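To make the "basic derivatives and the chain rule" idea concrete, here is a minimal sketch of forward-mode automatic differentiation using dual numbers, written in plain Julia with no packages. It is not the article's original listing, which does not appear in this excerpt. The `Dual` type and the `ending_value` projection are hypothetical names invented for illustration, and the flat-rate account growth is an assumed toy model. Real autodiff packages are far more complete, but the mechanism is similar in spirit.

```julia
# Minimal forward-mode automatic differentiation with dual numbers.
# `Dual` and `ending_value` are hypothetical, illustration-only names.
struct Dual <: Number
    val::Float64   # value of the expression
    der::Float64   # derivative with respect to the chosen input
end

# Basic derivative rules; chaining them operation by operation
# is the chain rule applied to the whole program.
Base.:+(a::Dual, b::Dual) = Dual(a.val + b.val, a.der + b.der)
Base.:*(a::Dual, b::Dual) = Dual(a.val * b.val, a.der * b.val + a.val * b.der)

# Let ordinary numbers mix with duals: constants carry a zero derivative.
Base.convert(::Type{Dual}, x::Real) = Dual(x, 0.0)
Base.promote_rule(::Type{Dual}, ::Type{<:Real}) = Dual

# Assumed toy model: grow a single deposit at a flat annual rate.
function ending_value(deposit, rate, years::Int)
    av = deposit
    for _ in 1:years
        av = av * (1 + rate)
    end
    return av
end

# Seed the rate with derivative 1 to get d(ending value)/d(rate).
result = ending_value(1000.0, Dual(0.05, 1.0), 10)
println("ending value:        ", result.val)
println("sensitivity to rate: ", result.der)
```

No small bump is added to the rate here: the derivative travels alongside the value through every `+` and `*`, so the sensitivity is exact up to floating-point rounding rather than a finite-difference approximation.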

Author(s): Alec Loudenback, FSA, MAAA; Dimitar Vanguelov

Publication Date: October 2021

Publication Site: SOA Digital, Emerging Topics