Graphic:

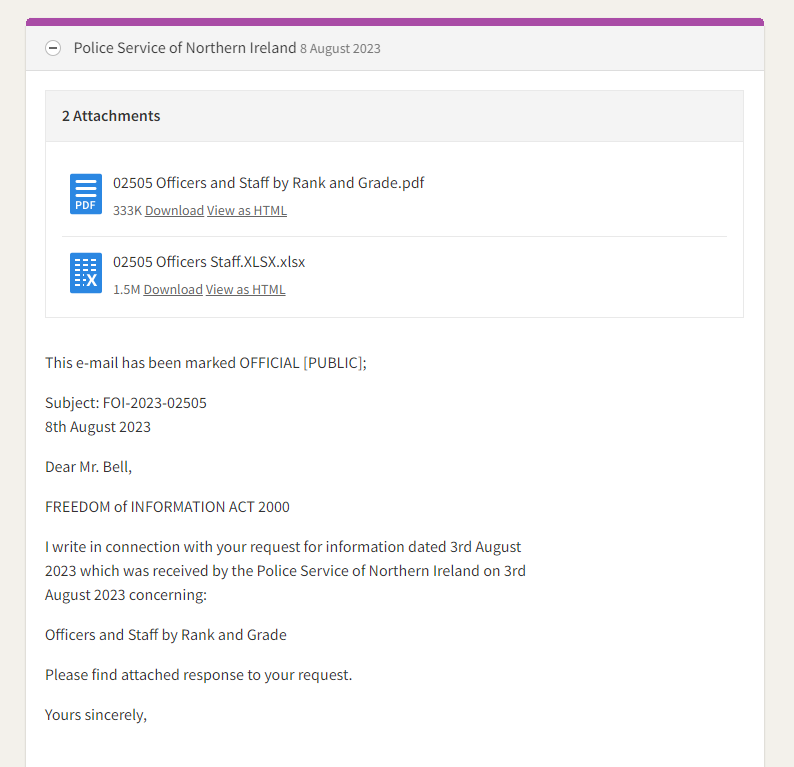

Additional: https://twitter.com/owenboswarva/status/1688975359604101129/photo/1

Excerpt:

However, a second tab in the spreadsheet contained multiple entries in relation to more than 10,000 individuals. For each individual, there are 32 pieces of data meaning that in total, there are about 345,000 pieces of data in the file.

The spreadsheet, which has been seen by the Belfast Telegraph after we were alerted to it by a relative of a serving officer, includes each officer’s service number, their status, their gender, their contract type, their last name and initials, details of how much of the week they work, and their rank.

It also includes the location where they are based (but not their home address), their duty type (from chief constable to detective, intelligence officer and so on), details of their unit (such as the anti-corruption unit or the vetting department), their branch and department, and other technical information about their employment.

There are 10,799 entries in the database. There are 9,276 police officers and police staff. It is not clear if the additional entries relate to other employees or former employees.

The data has been removed from the internet.

There are details of staff who are suspended, on career breaks, or partly retired.

It reveals members of the organised crime unit, telecom liaison officers, intelligence officers stationed at ports and airports, PSNI pilots in its air support unit, officers in the surveillance unit and – of acute sensitivity – almost 40 PSNI staff based at MI5’s headquarters in Holywood.

There are a tiny number of individuals whose unit is given as “secret”. But although that does not disclose precisely what they do, it marks them out as operating in an acutely sensitive area – and then gives their name.

Author(s): Sam McBride and Liam Tunney

Publication Date: 8 Aug 2023

Publication Site: Belfast Telegraph