Link: https://juliaactuary.org/blog/julia-actuaries/

Excerpt:

Compared with other great tools like R and Python, it can be difficult to pin down a single reason to motivate a switch to Julia, but hopefully this article has piqued your interest in trying it for your next project.

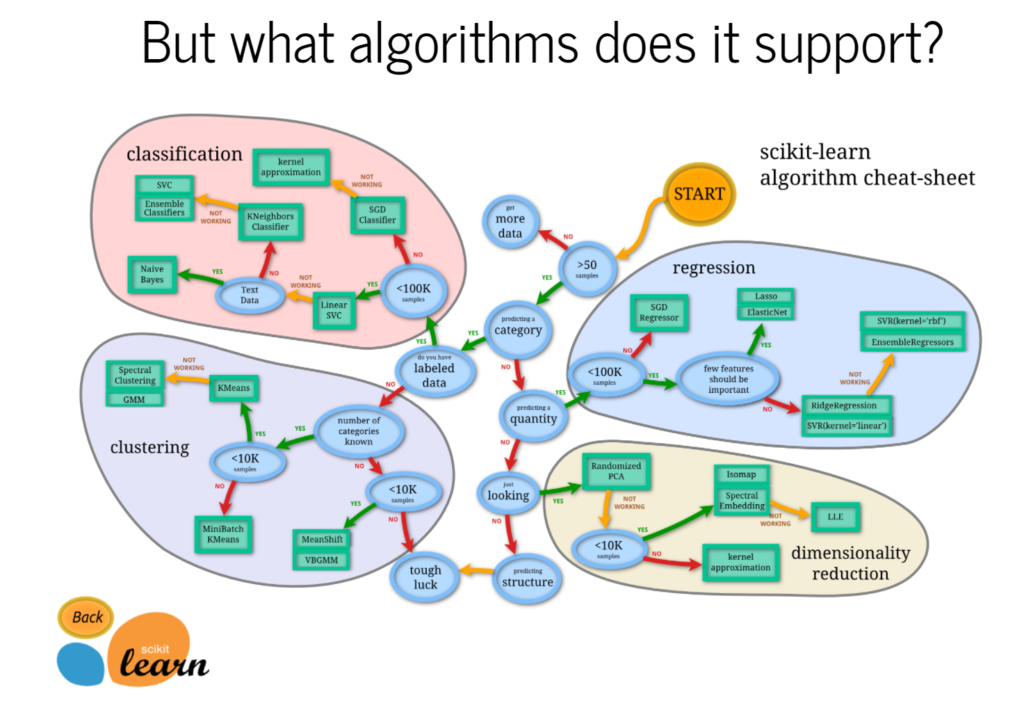

That said, Julia shouldn’t be the only tool in your toolkit. SQL will remain an important way to interact with databases. R and Python aren’t going anywhere in the short term and will always offer a different perspective on things!

In an earlier article, I talked about becoming a 10x Actuary, which means being proficient in the language of computers so that you can build and implement great things. In a large way, the choice of tools and paradigms shapes your focus. Productivity is one aspect, expressiveness is another, and speed is yet another. There are many reasons to think carefully about the tools you use, and trying out different ones is probably the best way to find what works best for you.

It is said that you cannot fully conceptualize something unless your language has a word for it. Similarly, you may find that breaking out of spreadsheet coordinates (and even a dataframe-centric view of the world) reveals different questions to ask and enables innovative ways to solve problems. In this way, you reward your intellect while building more meaningful and relevant models and analyses.

Author(s): Alec Loudenback

Publication Date: 9 July 2020

Publication Site: JuliaActuary